Social media listening solutions often promote, as a key feature, the ability to map how customers perceive your product. Most software does this with an automated sentiment analysis tool, promoted as a quick way to determine the writer’s attitude towards your product within the context of an overall conversation. Individual mentions (e.g. a post, tweet or status update) within a conversation are usually categorised simply as positive, neutral or negative, allowing you to see how customer satisfaction develops over time. But does it really work?

Over the years we have observed some debate about sentiment analysis and its benefits. Although automated processing is generally quicker and more convenient, the downside is that its classification of data is rarely as accurate as human analysis, because a computer program has difficulty identifying sarcasm, subtleties in language and the meaning behind the words used. This becomes even more of a problem when you are analysing complex emotions in conversations about health.

How automated sentiment analysis fails

Several sentiment analysis algorithms use natural language processing and text analysis to pick out ‘trigger’ keywords within a text and label the post positive or negative accordingly. The tweets below were all picked up automatically by a leading social media monitoring tool in some of our studies of conversation among healthcare professionals, and labelled negative or positive due to the vocabulary within them. We looked at whether human analysis would necessarily label them in the same way.

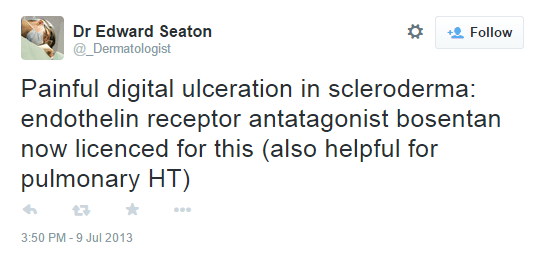

Figure 1: Tweet from Consultant Dermatologist in London, UK categorized as negative by automated sentiment analysis

The post in Figure 1 was categorised as negative by the automated sentiment analysis system because ‘painful’ was interpreted as a negative trigger word. Human analysis, however, would interpret the post differently depending on the business questions being considered in the study. If the study were conducted on behalf of the brand Bosentan, this post might be seen as positive. A competitor of Bosentan, on the other hand, might consider the tweet negative since it reflects the competitive environment.

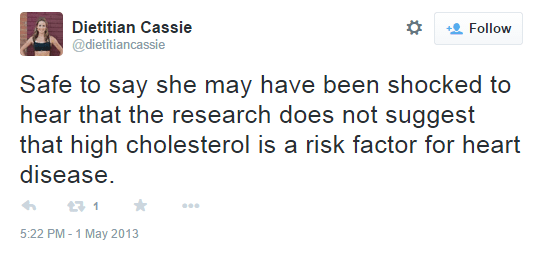

Figure 2: Tweet from dietician in USA categorized as negative by automated sentiment analysis.

The example in Figure 2 is a tweet from a dietician. Her post was automatically classed as negative because it includes phrases such as ‘shocked’, ‘high cholesterol’, ‘risk factor’ and ‘heart disease’, all of which are potentially ‘negative’ concepts. However, a human reading of the tweet reveals that in this case the author is referring to the ‘positive’ fact that the research in question does not suggest particularly negative implications.
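To illustrate how this kind of misclassification can arise, here is a minimal Python sketch of a trigger-keyword classifier. It is not the algorithm used by any particular monitoring tool: the trigger lists, the function names and the example sentence are all assumptions made for illustration, and the example sentence is invented rather than taken from the tweet in Figure 2.

```python
import re

# Hypothetical trigger lists for illustration only. The negative phrases are
# the ones mentioned in this article; the positive ones are invented.
NEGATIVE_TRIGGERS = ["shocked", "high cholesterol", "risk factor",
                     "heart disease", "painful"]
POSITIVE_TRIGGERS = ["great", "effective", "improved"]


def count_hits(text, triggers):
    """Count how many trigger phrases occur in the text as whole words."""
    lowered = text.lower()
    return sum(
        1 for phrase in triggers
        if re.search(r"\b" + re.escape(phrase) + r"\b", lowered)
    )


def naive_sentiment(text):
    """Label text by comparing counts of negative and positive triggers."""
    negative = count_hits(text, NEGATIVE_TRIGGERS)
    positive = count_hits(text, POSITIVE_TRIGGERS)
    if negative > positive:
        return "negative"
    if positive > negative:
        return "positive"
    return "neutral"


# Invented sentence: reassuring in meaning, but full of 'negative' vocabulary.
example = ("Not shocked: the study suggests high cholesterol is a smaller "
           "risk factor for heart disease than we feared.")
print(naive_sentiment(example))  # prints "negative"
```

The sketch counts vocabulary and ignores negation and context, so a reassuring message is still labelled negative. That is the gap a human analyst fills.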

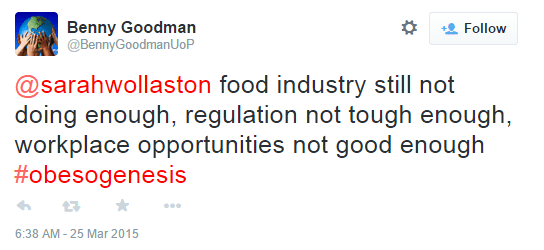

While automated sentiment analysis can alert the analyst to high-level developments, the content needs to be examined in a multidimensional context before appropriate strategic insights can be provided. The tweet in Figure 3 was classified as negative by automated sentiment analysis and draws the analyst’s attention towards a potential issue worth investigating.

Figure 3: Tweet from nursing lecturer in Cornwall, UK categorized as negative by sentiment analysis

Is sentiment analysis more than just automatic categorization?

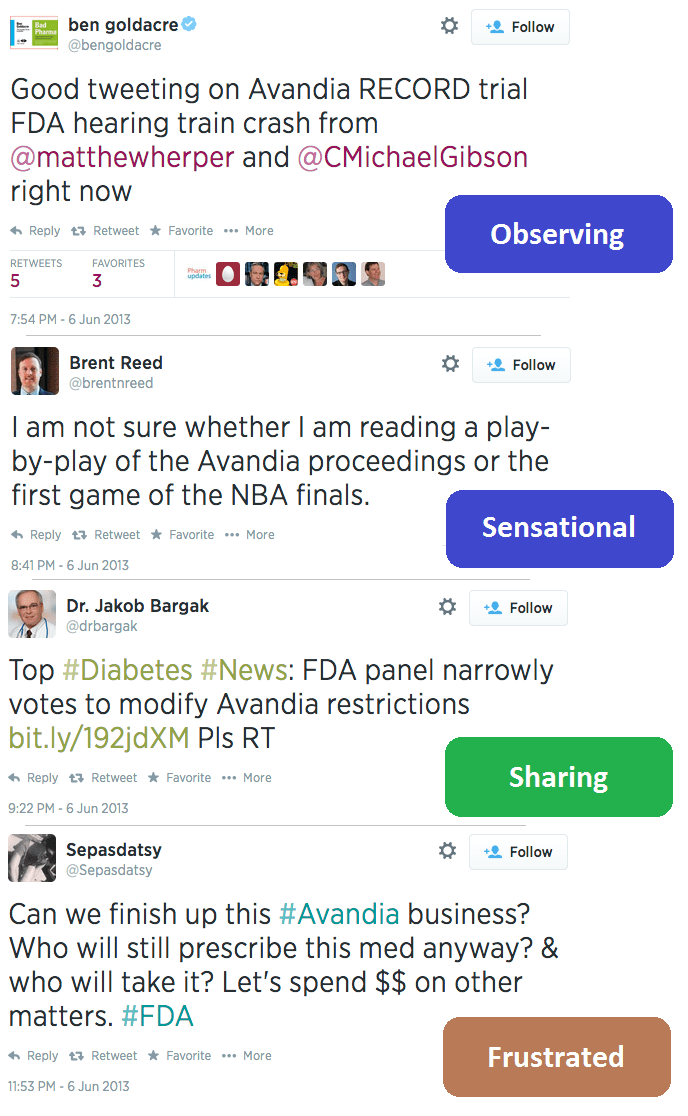

In past research projects we noticed a need to move beyond simple positive/negative labels. In our ‘Digital Opinion Leaders in Diabetes’ study, we analyzed healthcare professional (HCP) conversation around this topic. One of our key study questions was “How did HCPs respond to the FDA’s announcements regarding Avandia?” To answer this question we did not want to look only at positive/negative, so we created a set of ‘action-oriented’ behavioral categories that would help us answer it better. Here are some tweets we grouped into our newly created categories.

Understanding social media listening, your goals and the complex healthcare industry

All analysis of conversation should be carried out in the context of the objectives of the particular study, which can make it much harder for automated sentiment analysis to work effectively. It is important that human analysis is undertaken by those trained to understand the development of social media listening, the goals of the study and the intricate cultural and political situations behind the healthcare industry.

Creation Healthcare’s strength lies in the ‘human’ classification conducted by our qualified analysts and aided by Creation Pinpoint’s unique software. Our expert analysis means all mentions are placed into context (such as cultural, political, medical, economic, financial, legal or regulatory) and we dig deeper to analyse what the discussion actually means to each of your stakeholders. Creation Healthcare has over 17 years of experience within the healthcare industry, which means we can align our analysis with the strategic business goals identified in the pre-study knowledge engineering meetings we have with our clients.

Before we classify posts based on their content, we take an in-depth look at each author’s profile to get to know the nature of their account: are they usually sarcastic, are they anonymous, are they credible, do they interact with their peers? These are all important questions that need to be answered before you include their opinions in a market research study.

Finally, we understand the importance of segmenting data into logical and sensible ‘data buckets’. In some projects we see the need to know much more than whether a post is negative or positive. We create sub-topics and categorise content based on our clients’ goals to make it easier for them to digest and understand.

If you plan to make decisions based on your market research then you need to make sure that the data you get has been analysed against your business questions and the analysis is accurate; this is something that current automated analysis unfortunately can’t do.

If you want to understand more about social media healthcare professional market research then contact me to organise a free presentation.

By Katie Kennedy
